A while ago I was researching JA3 hashes and how they may help with bot mitigation. The first problem I ran into: even though many services implement the hash calculation, there is no good database usable as a feed, so I decided to try to make one. Here you can find answers to a few questions: what is it? How does it work? How is it useful?

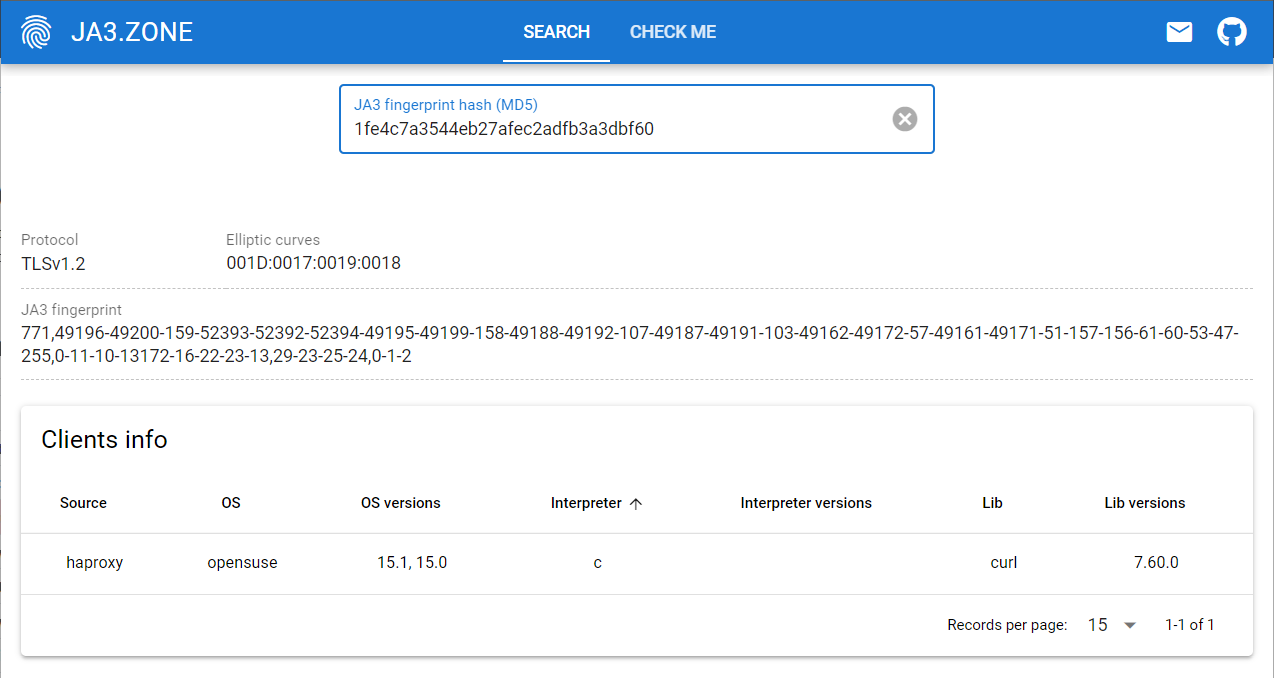

Spoiler: I did it, and you can check the results here: ja3.zone.

JA3… what?

Just skip this part if you already know what JA3 is and how it works. For everyone else, in a nutshell: it is a method of fingerprinting clients (JA3) or servers (JA3S) based on the SSL/TLS handshake.

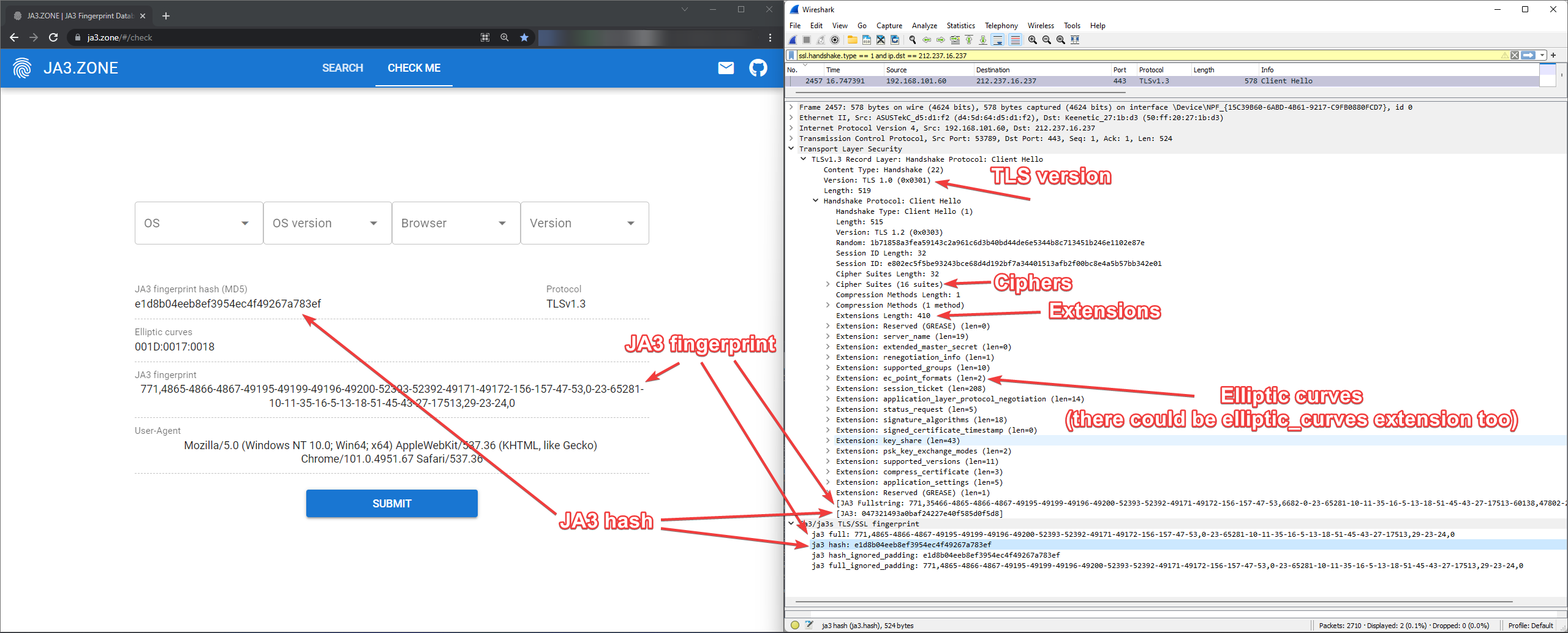

Long story short: when a client connects to a website via HTTPS, it sends a lot of information in its ClientHello message:

- TLSVersion: which protocol version it would like to use, e.g. TLSv1.2

- Ciphers: the list of supported cipher suites, e.g.

Cipher Suite: TLS_AES_128_GCM_SHA256 (0x1301)

Cipher Suite: TLS_AES_256_GCM_SHA384 (0x1302)

etc.

- Extensions: supported extensions, e.g.

Extension: server_name

Extension: session_ticket

etc.

- EllipticCurves/EllipticCurvePointFormats: elliptic curve configuration, e.g.

Elliptic curve: secp256r1 (0x0017)

EC point format: uncompressed (0)

etc.

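We collect all of that, keeping track of both which items are present and the order they appear in. Then the values are converted to decimal numbers and joined into a single string, the fingerprint: the fields go in the order SSLVersion,Ciphers,Extensions,EllipticCurves,EllipticCurvePointFormats, separated by commas, with the values inside each field separated by dashes.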

The JA3 hash is the MD5 hash of the fingerprint (the value we just got). Pretty much the same approach applies not only to clients but to servers as well. However, we don't need it for our use case and won't use it for now.
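To make the format concrete, here is a minimal Python sketch that builds a fingerprint from already-parsed ClientHello values and hashes it. It assumes the values were extracted elsewhere (e.g. from a PCAP); the function names are hypothetical, and the sample values are taken from the examples above, not from a real capture.

```python
import hashlib


def ja3_fingerprint(version, ciphers, extensions, curves, point_formats):
    """Join decimal ClientHello values into a JA3 fingerprint string.

    Field order: SSLVersion,Ciphers,Extensions,EllipticCurves,EllipticCurvePointFormats.
    Values inside a field are joined with '-', fields with ','.
    """
    def join(values):
        return "-".join(str(v) for v in values)

    return ",".join(
        [str(version), join(ciphers), join(extensions), join(curves), join(point_formats)]
    )


def ja3_hash(fingerprint):
    # The JA3 hash is the lowercase hex MD5 digest of the fingerprint string.
    return hashlib.md5(fingerprint.encode("ascii")).hexdigest()


# Sample values: TLS 1.2 (0x0303), TLS_AES_128/256_GCM cipher suites,
# server_name (0) and session_ticket (35) extensions,
# secp256r1 (0x0017) curve, uncompressed (0) point format.
fp = ja3_fingerprint(0x0303, [0x1301, 0x1302], [0, 35], [0x0017], [0])
print(fp)  # 771,4865-4866,0-35,23,0
print(ja3_hash(fp))
```

An empty list simply produces an empty field (e.g. "771,,,,"), so the five-field layout is preserved even when a client offers no curves.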

You can find a more detailed description here, or check your JA3 hash here (and maybe even submit it to the DB?... Right?).

Whoa-whoa! Wait a minute…

I'm sure you have already noticed that there are several different JA3 hash values in the screenshot above. But why do they differ if the request is the same?

The answer is pretty simple: there are different implementations of the hash calculation algorithm. E.g. one does not remove GREASE cipher suites, another one does, and a third one also removes the padding extension. In the end we get different hashes, which makes things harder when building a DB that should contain all possible combinations.
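The effect is easy to reproduce. The sketch below builds the fingerprint for the same hypothetical ClientHello three ways: keeping GREASE values, dropping them (GREASE values are the 16 reserved codes 0x0A0A through 0xFAFA from RFC 8701), and additionally dropping the padding extension (21). The normalization switches are illustrative and not a copy of any specific tool's code.

```python
import hashlib

# GREASE values reserved by RFC 8701: 0x0A0A, 0x1A1A, ..., 0xFAFA.
GREASE = {0x0A0A + 0x1010 * i for i in range(16)}
PADDING_EXTENSION = 21  # TLS padding extension (RFC 7685)


def fingerprint(version, ciphers, extensions, curves, point_formats,
                drop_grease=True, drop_padding=False):
    """Build a JA3-style fingerprint with configurable normalization."""
    def clean(values, extra_drop=()):
        return [v for v in values
                if not (drop_grease and v in GREASE) and v not in extra_drop]

    ext_drop = (PADDING_EXTENSION,) if drop_padding else ()
    fields = [
        clean(ciphers),
        clean(extensions, ext_drop),
        clean(curves),
        list(point_formats),
    ]
    return ",".join([str(version)] + ["-".join(map(str, f)) for f in fields])


# Hypothetical ClientHello containing GREASE values and the padding extension.
hello = dict(
    version=771,
    ciphers=[0x2A2A, 0x1301, 0x1302],
    extensions=[0x3A3A, 0, 21, 35],
    curves=[0x4A4A, 29, 23],
    point_formats=[0],
)

variants = {
    "keep GREASE": fingerprint(**hello, drop_grease=False),
    "drop GREASE": fingerprint(**hello),
    "drop GREASE + padding": fingerprint(**hello, drop_padding=True),
}
for name, fp in variants.items():
    print(f"{name:22} {hashlib.md5(fp.encode()).hexdigest()}  {fp}")
```

Three normalization choices, three different MD5 values for one and the same handshake, which is exactly why a hash database has to record which implementation produced each hash.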

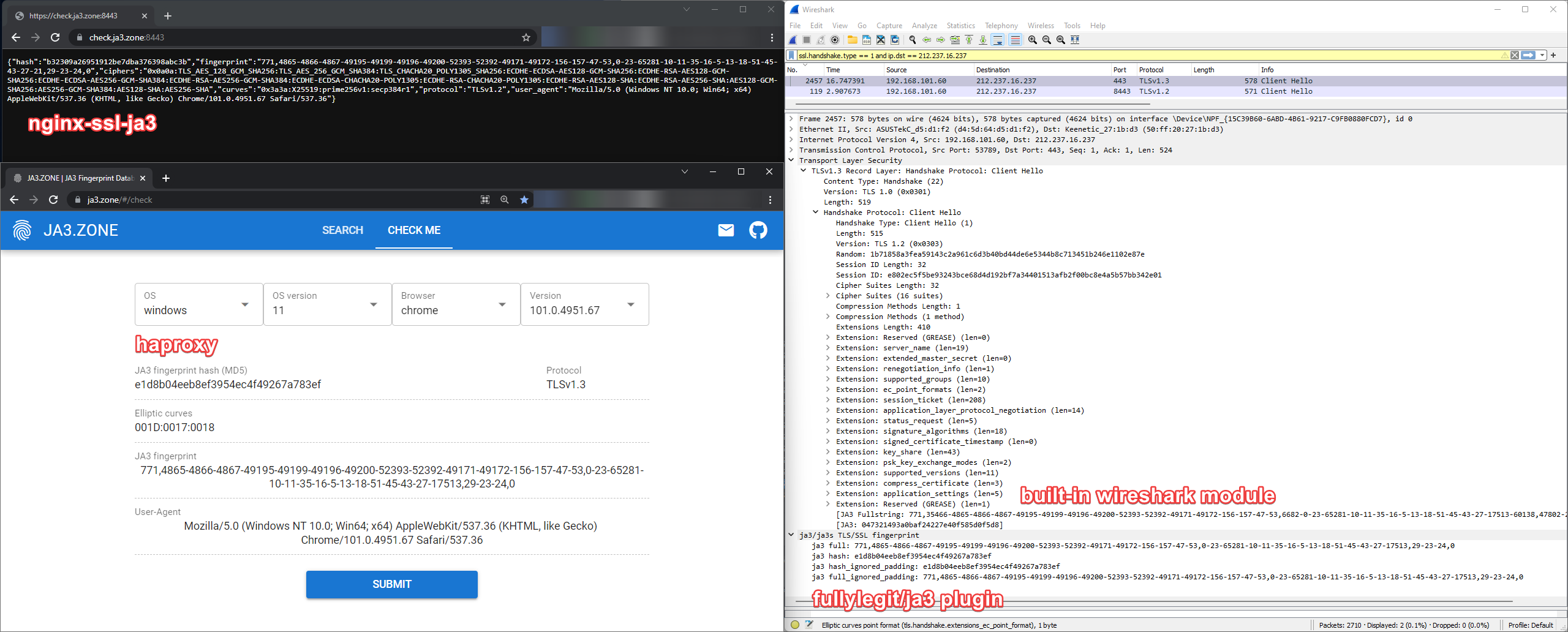

Let's assume we are focused on bot protection. Even for this use case we get different hashes from wireshark (built-in module), the wireshark ja3 lua plugin (fullylegit/ja3), haproxy and the nginx-ssl-ja3 module.

So, we got:

- 047321493a0baf24227e40f585d0f5d8: built-in wireshark

- e1d8b04eeb8ef3954ec4f49267a783ef: wireshark lua plugin and haproxy

- b32309a26951912be7dba376398abc3b: nginx-ssl-ja3 module

And which of them should we consider the correct one?

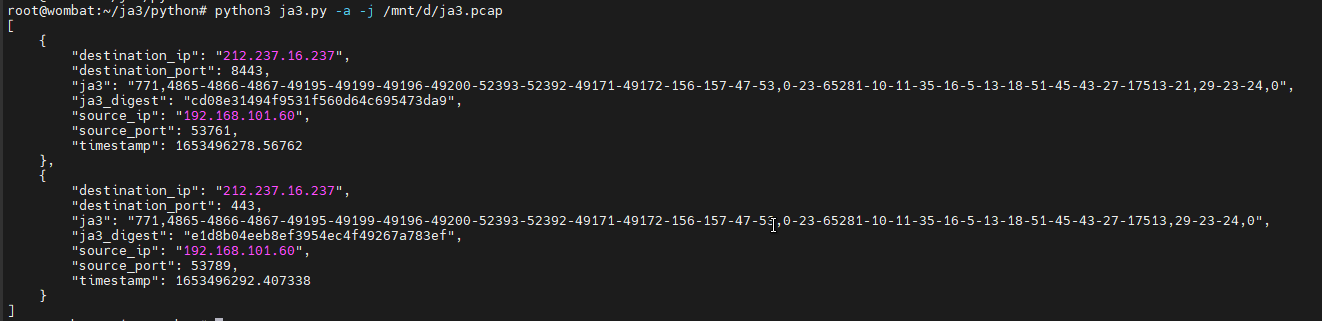

Let's take the python implementation right from the salesforce/ja3 repository and run it against the recorded PCAP file.

So now we know:

- the hash the nginx-ssl-ja3 module should have produced is cd08e31494f9531f560d64c695473da9

- it seems the client somehow used a different SSL configuration when connecting to nginx-ssl-ja3 than when connecting to haproxy. But even so, the nginx module calculated the hash differently (wrongly?)

- the built-in wireshark module does not remove GREASE ciphers/extensions/curves (I found a reported issue)

- the JA3 python implementation, the lua wireshark plugin and haproxy are doing fine

Which JA3 implementation are we going to use then? I'd say both: nginx-ssl-ja3 and haproxy. If we build the DB just for haproxy, it would be useless for nginx, and vice versa. Besides, for now nginx-ssl-ja3 is the only way to get JA3 hashes in nginx.

Just to note: the nginx-ssl-ja3 developer (fooinha) is awesome and fixed the issue for this particular case within a couple of days. I hope and believe that eventually this tool will work as expected and calculate all hashes correctly.

UPDATE: it seems that all issues with wrong hashes were fixed in recent commits (82efc5a), so builds after 05/31/2022 should work fine.

Existing solutions

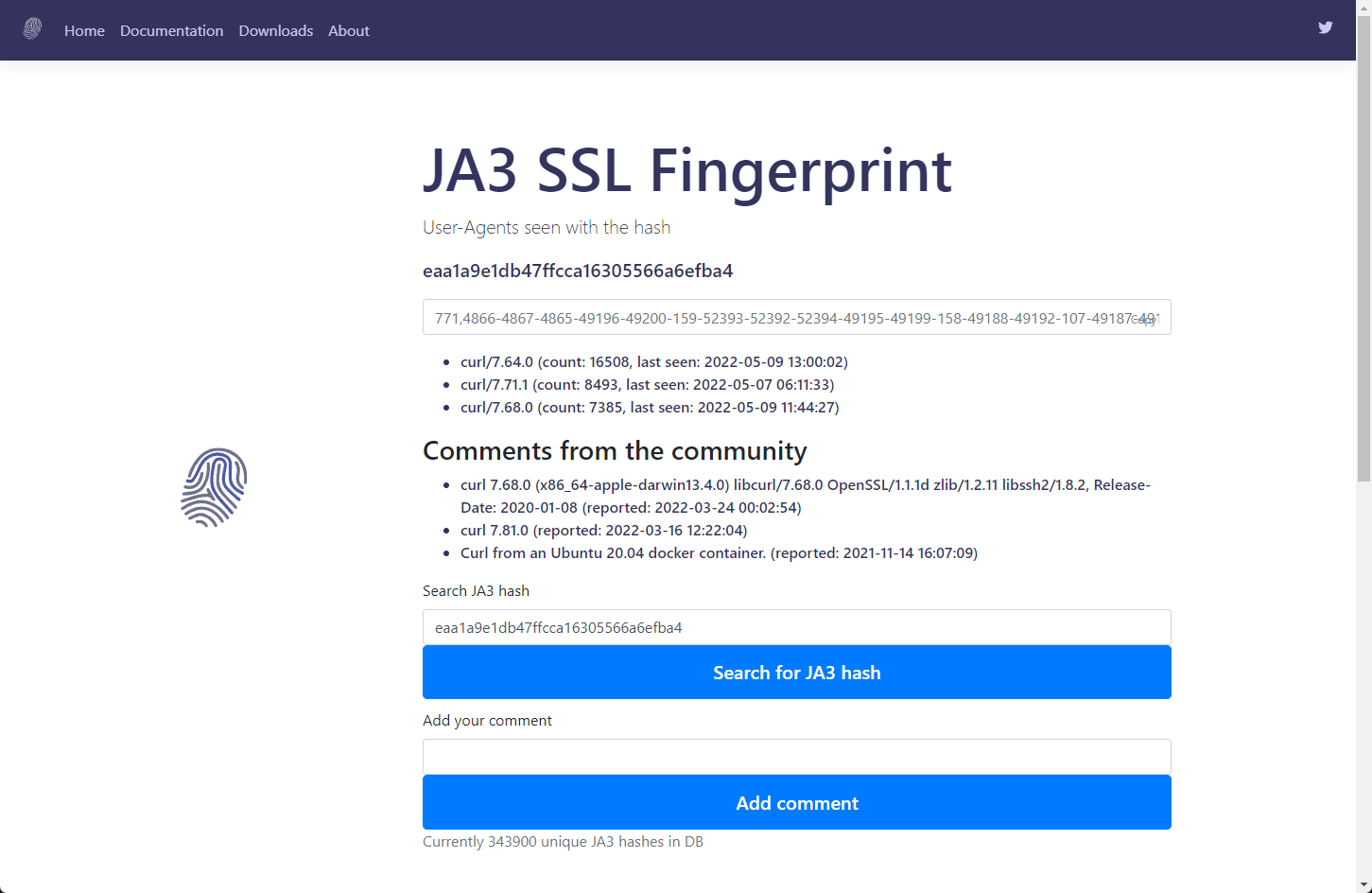

As I said before, there is no good database of JA3 hashes usable for bot detection yet. All we have for now is the ja3er.com project.

Pros:

- you can download the entire database right from the website in two variants: by hash or by User-Agent

- the community can add comments to existing hashes

Cons:

- hashes are tied to the User-Agent header, which is fully controlled by the user. E.g. I can use curl with the -A option, or slightly modify a python script, to change it easily.

- there are only two fields to work with, hash and fingerprint; there is no separate list of ciphers, elliptic curves, protocol version etc.

- the community can add comments to existing hashes with fake data, so it's unclear which user-agents are real and which were changed by scripts/tools.

- it's not clear whether hashes are calculated correctly: for the same browser I got different hashes from ja3er, nginx-ssl-ja3 and haproxy (which we consider the correct one).
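For what it's worth, the fingerprint string itself still carries the numeric protocol version, cipher, extension and curve codes; what is missing is a readable breakdown. A minimal sketch of splitting a fingerprint back into its fields (the function name is hypothetical):

```python
def parse_ja3(fingerprint):
    """Split a JA3 fingerprint string back into its five numeric fields."""
    version, ciphers, extensions, curves, point_formats = fingerprint.split(",")

    def to_ints(field):
        # Empty fields (e.g. no curves offered) become empty lists.
        return [int(v) for v in field.split("-")] if field else []

    return {
        "version": int(version),
        "ciphers": to_ints(ciphers),
        "extensions": to_ints(extensions),
        "curves": to_ints(curves),
        "point_formats": to_ints(point_formats),
    }


parsed = parse_ja3("771,4865-4866,0-35,23,0")
# Hex codes as wireshark displays them; names still need a lookup table.
print([f"0x{c:04x}" for c in parsed["ciphers"]])  # ['0x1301', '0x1302']
print(f"0x{parsed['version']:04x}")  # 0x0303
```

Turning 0x1301 into TLS_AES_128_GCM_SHA256 would still require a lookup table such as the IANA TLS parameters registry.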

In general, it is a good resource for distinguishing different users, but it does not cover the bot detection use case.

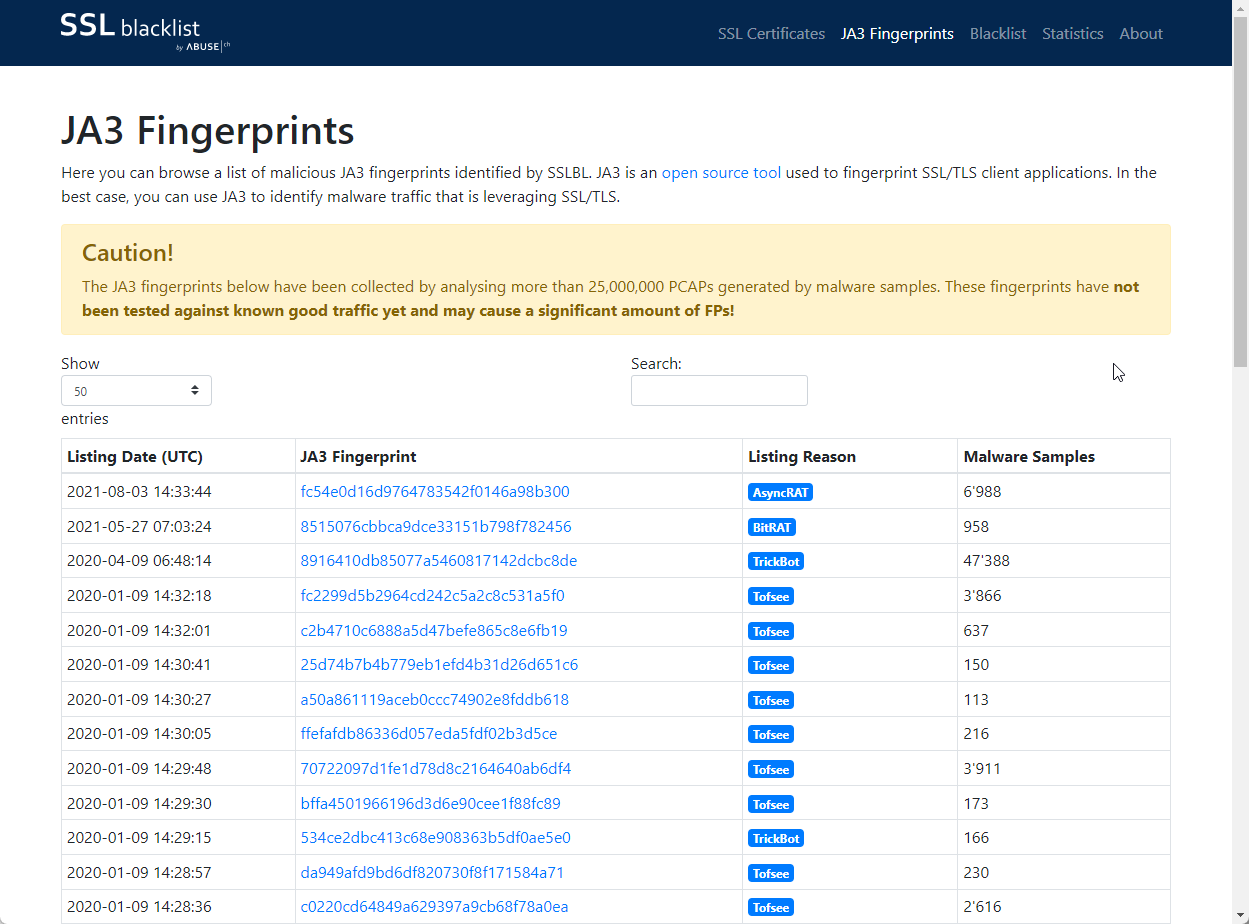

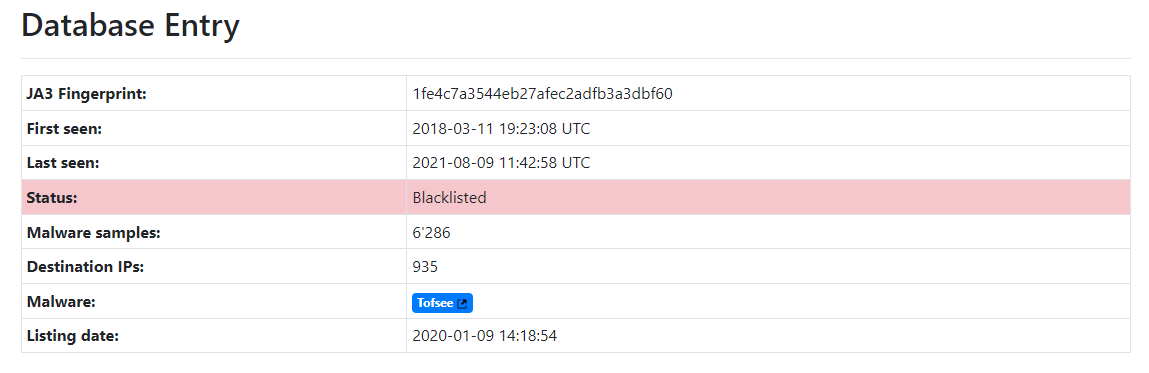

The second one I found is abuse.ch: a curated list of JA3 hashes that were spotted in malware activity. I believe such a blacklist should work in theory; however, if the payload is not related to the SSL protocol itself, the attacker would most likely use some default tool/lib they are familiar with to perform the attack.

I checked the listed hashes against our DB and, indeed, several of them belong to default tools:

- a50a861119aceb0ccc74902e8fddb618 => curl 7.21.0 on debian 6

- c0220cd64849a629397a9cb68f78a0ea => nodejs 10 (and multiple other versions) with axios 0.27.2 (and multiple other versions)

- e7643725fcff971e3051fe0e47fc2c71 => java 8u161/8u162 with apachehttpclient 4.5 (and multiple other versions)

- 1fe4c7a3544eb27afec2adfb3a3dbf60 => curl 7.60.0 on opensuse 15.0/15.1

- 40adfd923eb82b89d8836ba37a19bca1 => wget 1.19.4 on ubuntu 18.04

- d2935c58fe676744fecc8614ee5356c7 => java 8u221 (and multiple other versions) with apachehttpclient 4.5 (and multiple other versions)

- 911479ac8a0813ed1241b3686ccdade9 => python 2.7.9 with requests 2.11.0 (and multiple other versions) on debian 8

- e3b2ab1f9a56f2fb4c9248f2f41631fa => curl 7.38.0 on debian 8

8 of 96 (~8%) hashes were related to legit tools or default libraries. I can't say for sure yet whether any other hashes in the blacklist belong to other common tools, nor that they definitely don't (I've only just started collecting hashes, so no wonder my DB is still small). But anyway, finding even a single legitimate fingerprint in a blacklist is a small wake-up call not to trust it blindly.
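The cross-check itself is a simple lookup. A minimal sketch, assuming the blacklist is available as a plain list of hashes (the actual abuse.ch feed format is not reproduced here) and using a few of the tool mappings above; the two all-zero/all-f hashes are made-up placeholders.

```python
# A few entries from the list above: hashes our DB attributes to default tools.
KNOWN_TOOLS = {
    "a50a861119aceb0ccc74902e8fddb618": "curl 7.21.0 on debian 6",
    "40adfd923eb82b89d8836ba37a19bca1": "wget 1.19.4 on ubuntu 18.04",
    "e3b2ab1f9a56f2fb4c9248f2f41631fa": "curl 7.38.0 on debian 8",
}


def legit_hits(blacklist, known_tools):
    """Return blacklisted hashes (normalized to lowercase) that match a known tool."""
    return {h: known_tools[h]
            for h in (x.strip().lower() for x in blacklist)
            if h in known_tools}


# Hypothetical blacklist: two hashes from the list above plus two placeholders.
blacklist = [
    "A50A861119ACEB0CCC74902E8FDDB618",
    "e3b2ab1f9a56f2fb4c9248f2f41631fa",
    "00000000000000000000000000000000",
    "ffffffffffffffffffffffffffffffff",
]
hits = legit_hits(blacklist, KNOWN_TOOLS)
print(f"{len(hits)} of {len(blacklist)} ({len(hits) / len(blacklist):.1%})")
for h, tool in hits.items():
    print(h, "=>", tool)
```

With the real numbers from this section, 8 of 96 comes out at about 8.3%.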

What do we want? JA3 database!

Let's first decide what we are going to do and what we expect as a result:

- make a page that returns the client's JA3 info

- make an API for the database

- check every possible combination of default clients and store their hashes in the database

- make a UI where users can look up a hash in the database or submit their client's hash to the DB

Opening toolbox

JA3 info page

This one is easy: we can just take the default haproxy image, while nginx has to be built with the nginx-ssl-ja3 module.

You can check your client hash by requesting these links:

- https://check.ja3.zone/ (haproxy; I consider it the default one, since it calculates hashes correctly)

- https://check.ja3.zone:8443/ (nginx with nginx-ssl-ja3)

Each service returns all values related to JA3 (haproxy variable => nginx variable => key in output JSON => description):

- %[ssl_fc_protocol_hello_id],%[ssl_fc_cipherlist_bin(1),be2dec(-,2)],%[ssl_fc_extlist_bin(1),be2dec(-,2)],%[ssl_fc_eclist_bin(1),be2dec(-,2)],%[ssl_fc_ecformats_bin,be2dec(-,1)] => $http_ssl_ja3 => fingerprint => the fingerprint we talked about earlier

- %[fingerprint,digest(md5),hex,lower] => $http_ssl_ja3_hash => hash => md5 hash of the fingerprint (here "fingerprint" stands for the expression above)

- %[ssl_fc_cipherlist_str(1)] => $ssl_ciphers => ciphers => human-readable ciphers list

- %[ssl_fc_eclist_bin(1),be2hex(:,2)] => $ssl_curves => curves => human-readable elliptic curves

- %[ssl_fc_protocol] => $ssl_protocol => protocol => human-readable protocol name

- %[req.fhdr(user-agent)] => $http_user_agent => user_agent => client user-agent header
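For scripted checks, the JSON can be fetched and sanity-checked with the python standard library alone. This sketch assumes the response is a flat JSON object with the keys listed above; the function names are hypothetical.

```python
import hashlib
import json
import urllib.request

CHECK_URL = "https://check.ja3.zone/"  # haproxy-backed endpoint from above


def fetch_ja3_info(url=CHECK_URL, timeout=10):
    """Request the info page and decode its JSON body (network call)."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return json.load(resp)


def hash_matches(info):
    """Check that "hash" is the MD5 of "fingerprint", as described above."""
    expected = hashlib.md5(info["fingerprint"].encode()).hexdigest()
    return info["hash"].lower() == expected


# Usage (requires network access):
#   info = fetch_ja3_info()
#   print(info["protocol"], info["hash"], hash_matches(info))
```

Note that this request itself is a client with its own fingerprint: the hash reported back belongs to python's urllib with the local OpenSSL build, not to your browser.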

Pre-built images are available on docker hub:

- wafninja/ja3-haproxy (recommended)

- wafninja/ja3-nginx

Here comes… THE database

I will skip the part about writing code and focus on the fields we are going to use for client fingerprints. Since we are going to send all requests ourselves, we know for sure:

- which OS is used (e.g. ubuntu 20.04)

- which language and library were used (e.g. python3 aiohttp==0.21.6)

- which hash we got by requesting the JA3 info page

All these checks should be reproducible, so anyone can trust the data we have collected.
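One way to keep such data reproducible is to store each observation with exactly these fields. A minimal sketch of a record type, assuming a python dataclass and field names of my own choosing (not the actual ja3.zone schema); the consistency check guards against storing a hash that does not match its fingerprint.

```python
import hashlib
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class Ja3Record:
    """One observation: which client produced which JA3 hash (hypothetical schema)."""
    os: str               # e.g. "ubuntu:20.04"
    interpreter: str      # e.g. "python3"
    library: str          # e.g. "aiohttp"
    library_version: str  # e.g. "0.21.6"
    fingerprint: str      # raw JA3 string returned by the info page
    ja3_hash: str         # MD5 of the fingerprint, as returned by the info page

    def __post_init__(self):
        # Reject records whose hash does not match their fingerprint.
        if hashlib.md5(self.fingerprint.encode()).hexdigest() != self.ja3_hash.lower():
            raise ValueError("ja3_hash does not match fingerprint")


fp = "771,4865-4866,0-35,23,0"
record = Ja3Record("ubuntu:20.04", "python3", "aiohttp", "0.21.6",
                   fp, hashlib.md5(fp.encode()).hexdigest())
print(asdict(record))
```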

Let's imagine for a moment that we have already collected all the data for all possible tools, OSes and their combinations. What could we do with it?

For example, we could select fingerprints that could be used by bad bots/scripts (e.g., python) and exclude fingerprints related to good ones (e.g., chrome). Then we can form a JA3 blacklist and use it in some IPS/WAF. Looks like a nice goal to achieve.
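In set terms, the blacklist is the set of hashes seen for unwanted clients minus every hash also produced by a legitimate one. A sketch with hypothetical client labels and short placeholder strings instead of real MD5 hashes:

```python
def build_blacklist(observations, bad_clients, good_clients):
    """Hashes seen for "bad" clients that never appear for "good" ones.

    observations: iterable of (client_family, ja3_hash) pairs.
    """
    bad, good = set(), set()
    for client, ja3_hash in observations:
        if client in bad_clients:
            bad.add(ja3_hash)
        elif client in good_clients:
            good.add(ja3_hash)
    # Any overlap with a legitimate client is dropped to avoid false positives.
    return bad - good


# Hypothetical observations; short strings stand in for real MD5 hashes.
observations = [
    ("python", "h1"),
    ("python", "h2"),
    ("curl", "h3"),
    ("chrome", "h4"),
    ("chrome", "h2"),  # same hash as a python client: must not be blocked
]
print(sorted(build_blacklist(observations, {"python", "curl"}, {"chrome"})))
# ['h1', 'h3']
```

Subtracting the "good" set is the conservative choice: a hash shared with a real browser is never blocked, at the cost of letting some bots through.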

So there is little left to do: just fill the database with data already! Last time I stopped right here, since thinking of all possible combinations of OS/interpreter/libraries/versions made my head hurt. But now success is near… I feel it…

mmm… More DATA

The most difficult part of this one is figuring out how to start and what a JA3 fingerprint depends on. Last time I assumed that a different OpenSSL version would give completely different results, but I noticed that it does not affect much, and in most cases the hashes are the same. So I removed the SSL library version from the collected metrics.

Start strong, finish stronger. But first:

- Which OS to choose?

The easiest way to deploy is docker, so let's take some default images of ubuntu, debian, alpine etc. and use them. Besides, all checks can then be automated with scripts via the docker SDK.

- Which interpreter to choose?

I decided to check tools like curl and wget from the default repositories, start with python (both 2 and 3), java and golang, and add some checks on legit browsers like firefox or chrome.

- Which libraries to choose?

Ideally? All of them, but to start with I chose the most popular ones, e.g.:

For golang: the default net/http library

For java: all JDK versions I could find

For python: aiohttp, grequests, http.client, httplib, httplib2, httpx, hyper, python_http_client, requests, treq, uplink, urllib.request, urllib2, urllib3

- Which library versions should we check?

Well… just all of them.

Let's say we decided to test ubuntu. The checklist would look something like this:

- Get the tag list from the docker registry. For each OS version, build an image with all test environment dependencies, then run the tests described below in each of them.

- For each interpreter, write a test script/command that retrieves the hash from the JA3 info page. For python, we get the list of available versions of each library and run the test script with each of them. Same for java: switch between JDK versions and compile/run the test scripts with each library version.

- In the end, the test results are enriched with OS, interpreter and version info and sent to the API to be stored in the DB.
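The nested loops of this checklist are essentially a Cartesian product. A sketch of the matrix expansion and the enrichment step, assuming pip-installable python libraries; how each job is actually run (e.g. through the docker SDK) and sent to the API is left out, and all names, tags and versions here are hypothetical.

```python
from itertools import product


def build_jobs(os_image, os_tags, libraries):
    """Expand the test matrix: one job per OS tag, library and library version.

    libraries maps a pip package name to the list of versions to test.
    """
    for tag, (lib, versions) in product(os_tags, libraries.items()):
        for version in versions:
            yield {
                "os": f"{os_image}:{tag}",
                "library": lib,
                "version": version,
                # Command to run inside the container before the test script.
                "install": f"pip install {lib}=={version}",
            }


def enrich(job, ja3_hash):
    """Attach the hash reported by the info page to the job metadata."""
    return {**job, "hash": ja3_hash}


# Hypothetical tags and versions; real lists would come from the docker
# registry and the package index.
jobs = list(build_jobs("ubuntu", ["18.04", "20.04"],
                       {"requests": ["2.11.0", "2.28.1"], "httpx": ["0.23.0"]}))
print(len(jobs))  # 6
print(jobs[0]["os"], jobs[0]["install"])  # ubuntu:18.04 pip install requests==2.11.0
```

The job count is the product of OS tags and library versions, which is why the matrix grows so quickly once more libraries are added.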

One does not simply create a UI

Actually, compared with the rest of the tasks, this one was pretty simple. If you have read this far, you should check it out here: ja3.zone

Of course, there are a lot more features I would like to add, but for now you can:

- search the database by JA3 hash

- report your browser's JA3 hash to the database right from the website

Hooray! I'm useful!

In conclusion, I would like to share some data on how many bots we are able to detect so far.

As the source of bot JA3 hashes I used a honeypot. It logs all the traffic into an elasticsearch database (JA3 hashes included). Then I took all hashes from the honeypot data and tried to find them in our newly created database.
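The lookup can be counted two ways: once per unique hash and once per request. A sketch, assuming the hashes have already been exported from elasticsearch as a flat list with one entry per request (the export step is not shown, and the function name is hypothetical):

```python
from collections import Counter


def coverage(request_hashes, known_hashes):
    """Return (share of unique hashes found, share of requests found)."""
    counts = Counter(request_hashes)  # hash -> number of requests
    if not counts:
        return 0.0, 0.0
    found = [h for h in counts if h in known_hashes]
    by_hash = len(found) / len(counts)
    by_traffic = sum(counts[h] for h in found) / sum(counts.values())
    return by_hash, by_traffic


# Hypothetical honeypot log: one JA3 hash per request.
log = ["aaa", "aaa", "aaa", "bbb", "ccc"]
by_hash, by_traffic = coverage(log, known_hashes={"aaa"})
print(f"by hash: {by_hash:.2%}, by traffic: {by_traffic:.2%}")
# by hash: 33.33%, by traffic: 60.00%
```

The two ratios can diverge a lot: a few very chatty clients whose hashes are in the DB push traffic coverage well above hash coverage.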

Unfortunately, it's not all that smooth…

For a long time, the honeypot was running nginx-ssl-ja3, which was updated from time to time. That's why I'm not sure whether the hashes were calculated correctly, or whether the calculation mechanism changed along the way.

I have switched the honeypots to haproxy just now and, obviously, there is not enough data yet to draw any conclusions. Anyway, I will do two comparisons: nginx-ssl-ja3 hashes collected over the years (even if they were calculated incorrectly or the calculation mechanism changed) and haproxy hashes collected over several days.

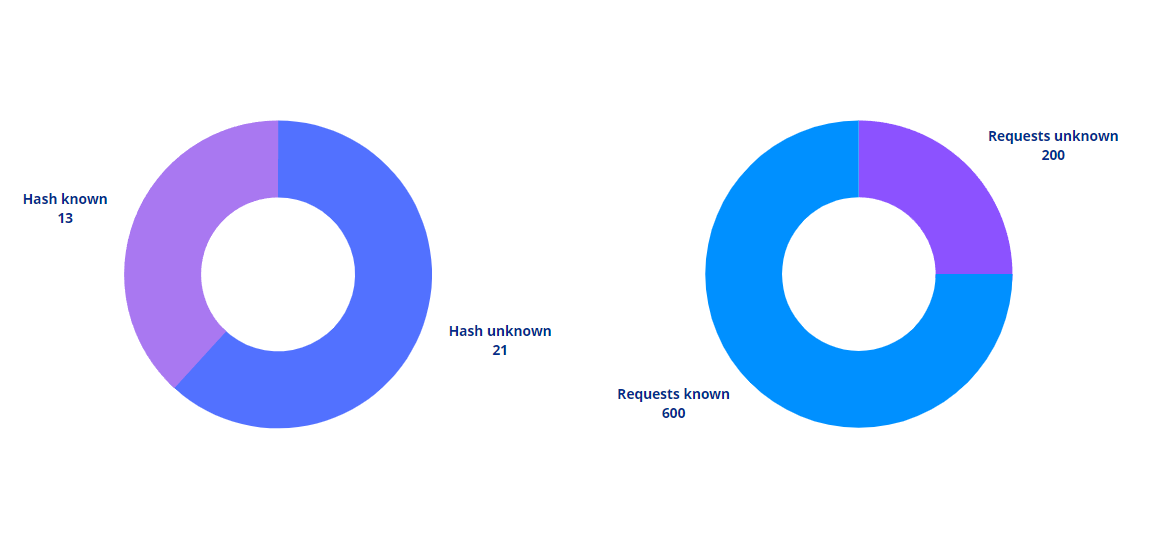

nginx-ssl-ja3 saw 769 hashes, and 111 of them are present in the current database: 14.43%. By traffic: of ~300k requests we detected ~65k: 21%.

haproxy saw 34 hashes, and 13 of them are present in the current database: 38.24%. By traffic: of ~800 requests we detected ~600: 76%.

So let's take the lowest result as the current baseline and say that, for now, about 20% of bot activity can be found in the database. I know, I know. It would be better if I expanded the languages/libraries checklist.

But don't worry, that's not the end of the story: I plan to keep enriching the database, so it should get better over time.